DistilGPT2: A Lightweight and Efficient Text Generation Model

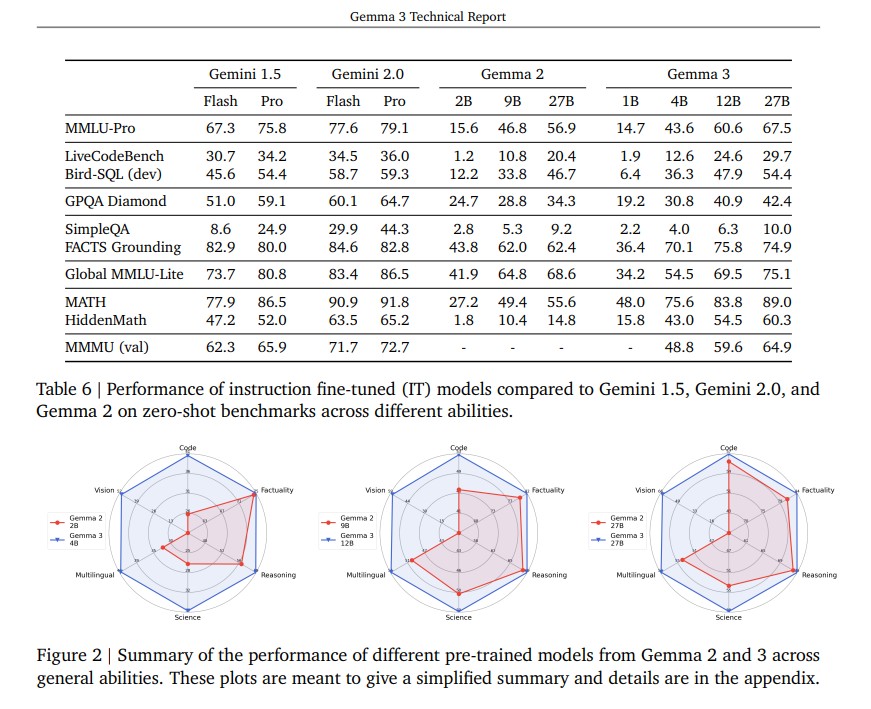

In the rapidly evolving field of artificial intelligence, large language models have transformed how machines understand and generate human language. Models like GPT-2 demonstrated the power of transformer-based architectures, but their size and computational demands made them difficult to deploy in resource-constrained environments. To address this challenge, DistilGPT2, a smaller, faster, and more efficient version …
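To put rough numbers on "smaller," the sketch below counts the parameters of a GPT-2-style decoder. It assumes the published Hugging Face configurations for `gpt2` and `distilgpt2`: a 50,257-token vocabulary, 1,024 positions, hidden size 768, and 12 versus 6 transformer layers. It also assumes that the output layer shares its weights with the token embedding. The function name `gpt2_param_count` is hypothetical. Treat this as an arithmetic illustration, not code taken from either model.

```python
# Rough parameter count for a GPT-2-style decoder.
# Assumptions: published gpt2/distilgpt2 config values (vocab 50257,
# 1024 positions, hidden size 768) and an LM head tied to the token
# embedding, so the output layer adds no extra parameters.

def gpt2_param_count(n_layer: int, d: int = 768, vocab: int = 50257, n_pos: int = 1024) -> int:
    """Hypothetical helper: total weights + biases for n_layer decoder blocks."""
    embeddings = vocab * d + n_pos * d   # token + learned position embeddings
    per_layer = (
        2 * d                            # ln_1 (scale + bias)
        + d * 3 * d + 3 * d              # fused Q/K/V projection
        + d * d + d                      # attention output projection
        + 2 * d                          # ln_2 (scale + bias)
        + d * 4 * d + 4 * d              # MLP up-projection (d -> 4d)
        + 4 * d * d + d                  # MLP down-projection (4d -> d)
    )
    final_norm = 2 * d                   # ln_f after the last block
    return embeddings + n_layer * per_layer + final_norm


gpt2 = gpt2_param_count(n_layer=12)      # GPT-2 (small)
distil = gpt2_param_count(n_layer=6)     # same width, half the depth

print(f"GPT-2:      {gpt2:,}")           # GPT-2:      124,439,808
print(f"DistilGPT2: {distil:,}")         # DistilGPT2: 81,912,576
print(f"ratio:      {distil / gpt2:.2f}")  # ratio:      0.66
```

Because the embedding tables stay the same size, halving the number of layers removes only about a third of the parameters (roughly 124M down to 82M), not half. The per-token compute in the transformer blocks, however, is roughly halved.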